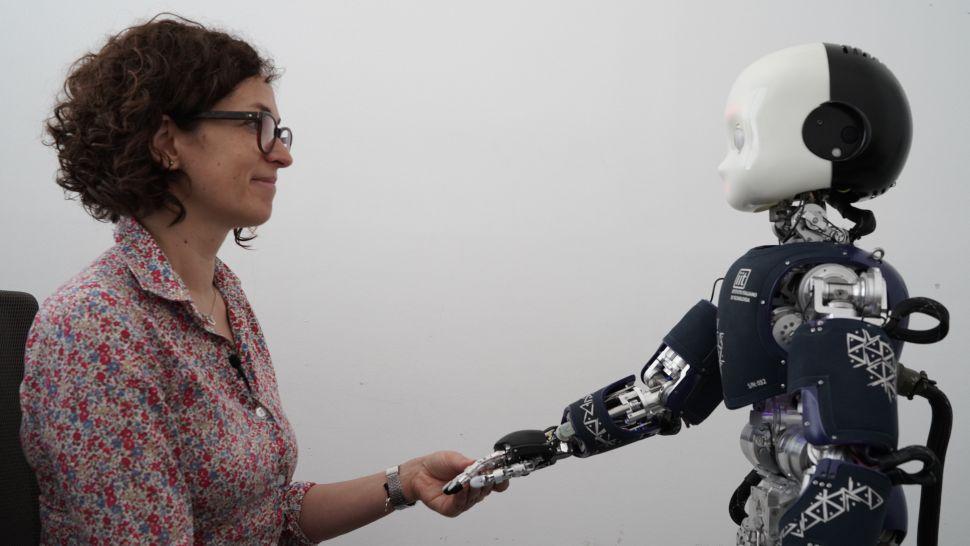

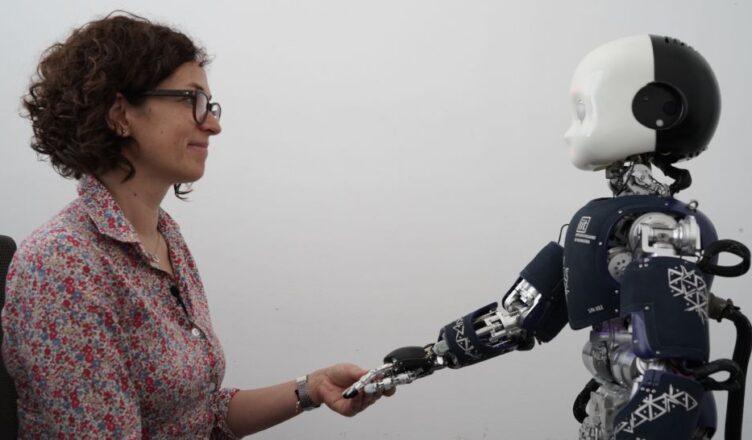

Device reportedly learned to recognize itself within its physical environment.

A team of U.S.-based scientists claims to have developed an artificial intelligence that has achieved a degree of self-awareness, an accomplishment that, if true, would mark a major leap forward for AI technology.

The researchers said in a New Scientist report this week that they have been working to get machines to “think about themselves,” specifically in the context of their physical environments.

To that end, they surrounded a robot connected to a neural network with five cameras and allowed the device to observe its own movements for several hours. The neural network could subsequently predict, with a high degree of accuracy, where the robot’s mechanical arm would end up in physical space if the robot moved it.
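The report does not describe the network's architecture or training procedure, so the following is only a toy sketch of the general idea: a model watches where an arm ends up for many random movements, then predicts the position for movements it has not seen. Everything here is an assumption made for illustration: the two-joint planar arm, the simulated "camera" observations with added noise, the small random-feature network fitted by least squares, and all function names. It is not the researchers' method.

```python
import numpy as np

LINK1, LINK2 = 1.0, 1.0  # hypothetical link lengths of a toy 2-joint planar arm


def observe_arm_tip(angles):
    """Simulated stand-in for camera observations: true tip position (x, y)."""
    t1, t2 = angles[:, 0], angles[:, 1]
    x = LINK1 * np.cos(t1) + LINK2 * np.cos(t1 + t2)
    y = LINK1 * np.sin(t1) + LINK2 * np.sin(t1 + t2)
    return np.stack([x, y], axis=1)


def hidden_layer(angles, W, b):
    """Fixed random tanh hidden layer, plus a constant column for the output bias."""
    H = np.tanh(angles @ W + b)
    return np.hstack([H, np.ones((H.shape[0], 1))])


def fit_self_model(angles, observed, n_hidden=200, seed=0):
    """Fit only the output weights by least squares (random-feature network)."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(2, n_hidden))
    b = rng.uniform(-2.0, 2.0, size=n_hidden)
    out, *_ = np.linalg.lstsq(hidden_layer(angles, W, b), observed, rcond=None)
    return W, b, out


def predict(model, angles):
    """Predict where the arm tip would be for the given joint angles."""
    W, b, out = model
    return hidden_layer(angles, W, b) @ out


rng = np.random.default_rng(42)

# "Observation" phase: random joint angles and noisy recorded tip positions.
train_angles = rng.uniform(-np.pi / 2, np.pi / 2, size=(2000, 2))
train_seen = observe_arm_tip(train_angles) + rng.normal(scale=0.01, size=(2000, 2))
model = fit_self_model(train_angles, train_seen)

# Evaluation: predict tip positions for movements the model never observed.
test_angles = rng.uniform(-np.pi / 2, np.pi / 2, size=(500, 2))
errors = np.linalg.norm(predict(model, test_angles) - observe_arm_tip(test_angles), axis=1)
mean_error = float(errors.mean())
print(f"mean prediction error: {mean_error:.4f} (arm reach is {LINK1 + LINK2})")
```

In this sketch the "self-model" is nothing more than a learned mapping from commanded joint angles to an observed position, which is also roughly the point the skeptics quoted later in the article make about the real system.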

“To me, this is the first time in the history of robotics that a robot has been able to create a mental model of itself,” Columbia University researcher Hod Lipson told the outlet. “It’s a small step, but it’s a sign of things to come.”

Lipson suggested that full human-like self-awareness in a robot is still several decades away. Other researchers, meanwhile, expressed skepticism about even these early claims of self-awareness.

“The computer simply matches shape and motion patterns that happen to be in the shape of a robot arm that moves,” the Georgia Institute of Technology’s Andrew Hundt told New Scientist.